SEO, GEO & AI News: March 23rd – April 5th, 2026

AI Summary

This post introduces Quentin Yacoub's new recurring news digest format, which is designed to track the latest industry shifts in SEO, GEO, and AI automation. Readers can expect a curated selection of high-relevance stories and strategic insights that focus on practical automation workflows and the ongoing evolution of the search landscape.

This article is also available in French: Actualités SEO, GEO & IA : 23 mars – 5 avril 2026

This is the first edition of my new format designed to track the latest industry shifts. I’ll be shipping these updates weekly when the news is as dense as this edition, or every two weeks otherwise. In this format, I’ll be sharing the specific stories I find most relevant for SEO/GEO strategy and AI automation.

I’d love your feedback on the format and the layout, so connect with me on LinkedIn.

SEO News

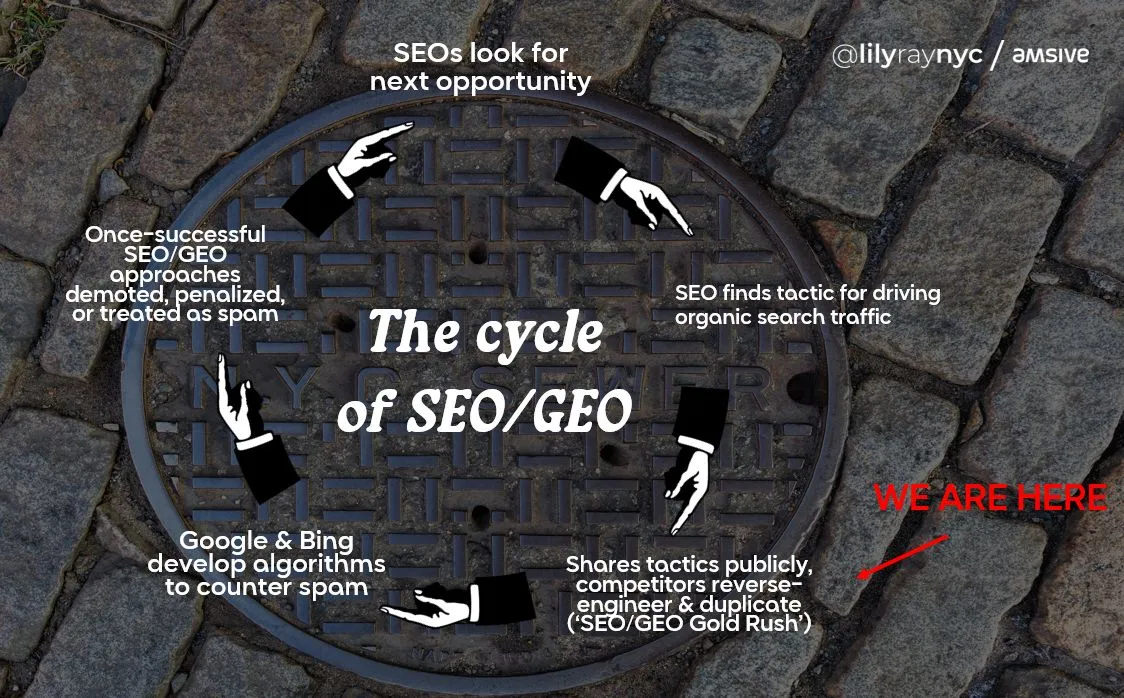

Your GEO Strategy Might Be Destroying Your SEO

Lily Ray, SEO Consultant, breaks down how popular GEO hacks are actually tanking the organic rankings that AI search depends on. Since LLMs use search indexes to find sources (RAG), losing your rankings means disappearing from AI answers too. She flags five major risks: AI content spam, fake “last updated” dates, self-serving listicles, prompt injection buttons, and mass-produced comparison pages. My take: Don’t trade your long-term organic foundation for a 3-month AI hype spike. Most traffic is still search-driven, and LLMs need those rankings to find you in the first place.

Google Releases March 2026 Spam Update

Google just rolled out the first spam update of the year. Surprisingly, it finished in less than 24 hours. While Google calls it a standard update, these usually target policy violations like scaled AI content and link abuse. I’m currently monitoring the data to see what’s actually moving.

Read the full post

Google Rolls Out March 2026 Core Update

Google launched its March 2026 core update on March 27, a broad overhaul expected to take about two weeks to complete. Coming right after the recent spam update, it is still too early to gauge the total impact on search results. However, Lily Ray has already reported on X that several sites are seeing significant drops in their rankings.

Read the full post

Googlebot's 2MB Fetch Limit for HTML

Gary Illyes, Analyst at Google, explains how Googlebot stops fetching any HTML file once it hits a 2MB limit, ignoring all subsequent bytes. It makes sense to me; crawling the web requires significant resources and energy, and this provides a clear incentive for developers to optimize their code.

Read more

Why it matters: For SEO specialists, indexation is a major concern. We should check whether the pages we manage exceed 2MB and trim them if they do. To be honest, 2MB is a very high threshold for HTML, and I would be surprised to find many pages above it, but it’s worth checking just in case. In the tools section, I’ve included a website to quickly check if a page is above 2MB.
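
For a quick scripted check, here is a minimal Python sketch of the size test described above. It is not Google's method: the constant treats "2MB" as 2 × 1024 × 1024 bytes, the function names are hypothetical, and it measures the body as received by `urllib` (which does not request compression by default), so treat the result as an approximation.

```python
import urllib.request

# Assumption: "2MB" is interpreted as 2 * 1024 * 1024 bytes.
# The source only states "2MB", so adjust if you read it differently.
GOOGLEBOT_HTML_LIMIT_BYTES = 2 * 1024 * 1024


def exceeds_limit(html_bytes: bytes, limit: int = GOOGLEBOT_HTML_LIMIT_BYTES) -> bool:
    """Return True when the HTML payload is strictly larger than the limit."""
    return len(html_bytes) > limit


def fetch_html(url: str, timeout: float = 10.0) -> bytes:
    """Download a page and return its body as raw bytes.

    The User-Agent value is a made-up placeholder for this sketch.
    """
    request = urllib.request.Request(url, headers={"User-Agent": "size-check-sketch/0.1"})
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return response.read()
```

To use it on a live page, call `exceeds_limit(fetch_html(url))` with a URL you manage. Anything past the limit would be the part of the document Googlebot ignores, so important content and links are safer near the top of the HTML.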

Google adds digitalSourceType property to Forum and Q&A markup

Google Search Central, March 24, 2026

Google updated its structured data guidelines for Q&A and Discussion Forums to include the digitalSourceType property. This allows sites to explicitly label content as human, AI-generated, or a hybrid of both to provide more clarity to Google’s ingestion systems.

Read the documentation

Why it matters: I've seen many new features like summary review boxes or automated FAQs. According to Google, it is now good practice to mention what is AI-generated. Personally, I don’t see the immediate value in adding it and won't be doing it yet, but it is worth noting.
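
For anyone who does want to experiment, here is a rough Python sketch of what the markup could look like on a forum post. The helper name and sample values are hypothetical. `TrainedAlgorithmicMediaDigitalSource` is a member of schema.org's IPTC digital source enumeration, but which values Google actually accepts for this feature is an assumption here, so check the documentation before shipping anything.

```python
import json


def build_forum_post_markup(headline, text, author_name, source_type=None):
    """Build a DiscussionForumPosting JSON-LD object as a Python dict.

    source_type is the bare name of a schema.org IPTC digital source
    enumeration member. Which members Google supports is an assumption.
    """
    markup = {
        "@context": "https://schema.org",
        "@type": "DiscussionForumPosting",
        "headline": headline,
        "text": text,
        "author": {"@type": "Person", "name": author_name},
    }
    # Only add the property when a label is provided.
    if source_type is not None:
        markup["digitalSourceType"] = f"https://schema.org/{source_type}"
    return markup


# Hypothetical sample values, for illustration only.
example = build_forum_post_markup(
    headline="Summary of this thread",
    text="An automatically generated recap of the discussion.",
    author_name="Forum Assistant",
    source_type="TrainedAlgorithmicMediaDigitalSource",
)
json_ld = json.dumps(example, indent=2)
```

The resulting `json_ld` string would go inside a `<script type="application/ld+json">` tag on the page.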

GEO News

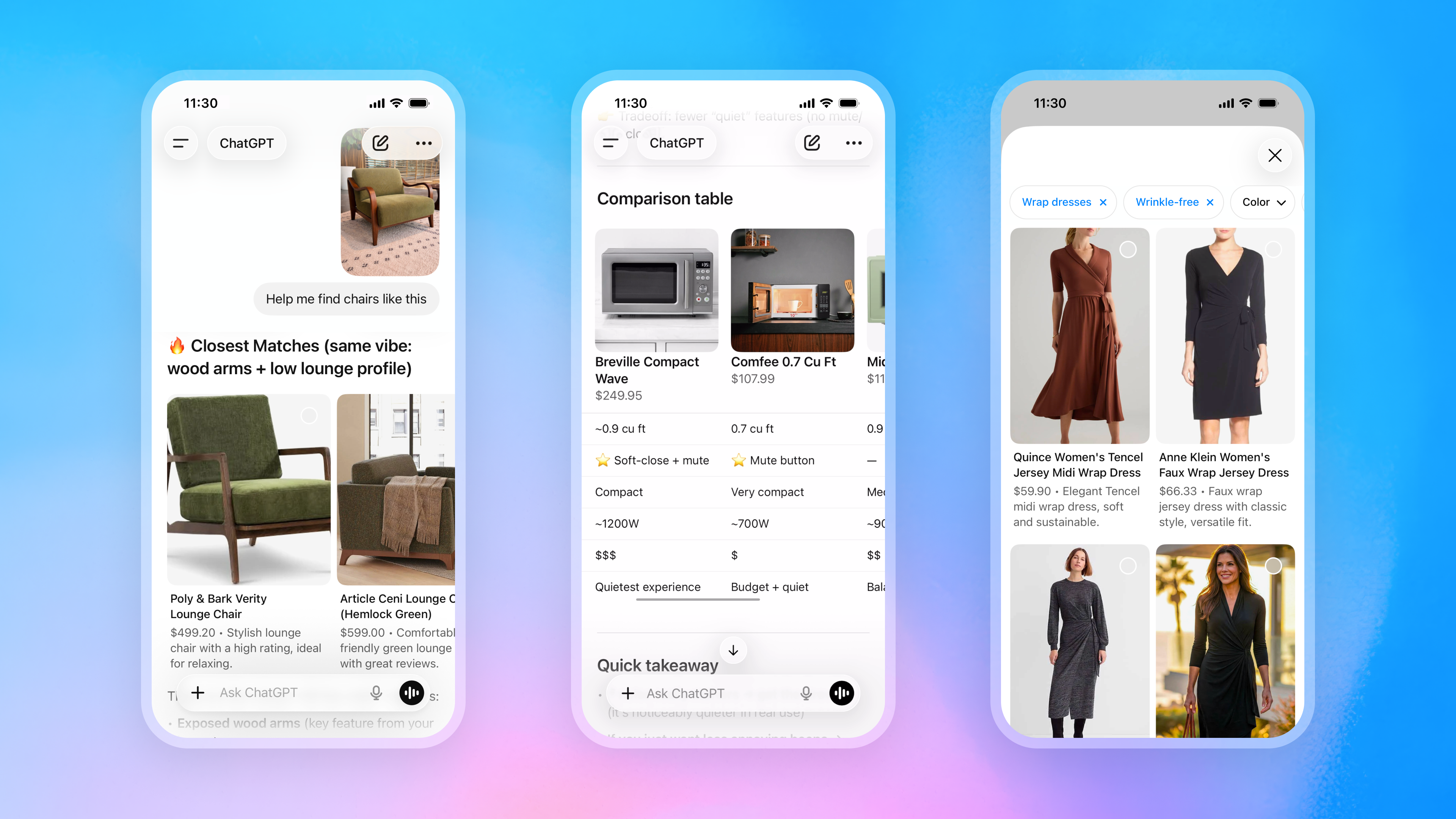

OpenAI Shifts its Agentic Commerce Strategy

OpenAI is scaling down its Instant Checkout model in favor of merchant-owned conversion paths. By expanding the Agentic Commerce Protocol (ACP), ChatGPT now facilitates visual product discovery and side-by-side comparisons by integrating direct merchant data feeds.

Why it matters: 1. This pivot is a reminder that our own websites remain our most important assets in 2026. 2. Treating OpenAI like Google by providing a merchant feed is a direct shortcut to increasing visibility within ChatGPT.

Walmart Pivots from ChatGPT Instant Checkout

Walmart’s pilot of OpenAI’s Instant Checkout resulted in conversion rates three times lower than traditional website click-throughs. Daniel Danker, Walmart’s EVP of Product and Design, described the experience as unsatisfying, leading the retailer to pivot toward its own integrated 'Sparky' chatbot model.

Read the full post

The End of the Listicle GEO Strategy?

Chris Long, March 28, 2026

Chris Long, Co-founder at Nectiv, describes how GPT 5.4 has refined its query fan-out process by searching significantly more sources and utilizing site: operators to verify brand features directly. This bypasses third-party listicles in favor of primary brand data and trusted aggregators like G2 or industry award winners.

Read the full LinkedIn post

Why it matters: This shift is ChatGPT’s first significant attempt to reduce the impact of manipulative tactics. It directly impacts GEO, as influencing LLMs through middleman comparison sites is becoming less effective than maintaining strong authority signals and trust data on your own domain.

Identifying User-Triggered AI Actions with Google-Agent

Google has added a new user-triggered fetcher, Google-Agent, to its documentation to identify AI agents performing specific actions like navigating sites or filling out forms on behalf of users. I’m looking forward to seeing this in our agentic traffic dashboards at LLM Optimizer to better understand what these agents are looking for and to make sure they are not blocked along their journey.
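
As a starting point for spotting this traffic in your own logs, here is a small Python sketch. It assumes the `Google-Agent` token appears somewhere in the User-Agent header and that log entries are available as `(path, user_agent)` pairs. Both are assumptions for illustration, and the function names are hypothetical.

```python
from collections import Counter

# Token named in the source. Where exactly it appears in the full
# User-Agent string is an assumption, so we do a substring match.
AGENT_TOKEN = "Google-Agent"


def is_google_agent(user_agent: str) -> bool:
    """Return True when the User-Agent string mentions the Google-Agent token."""
    return AGENT_TOKEN.lower() in user_agent.lower()


def count_agent_hits(log_entries):
    """Count requests per path made by user agents mentioning the token.

    log_entries is an iterable of (path, user_agent) tuples, which is a
    hypothetical log shape chosen for this sketch.
    """
    hits = Counter()
    for path, user_agent in log_entries:
        if is_google_agent(user_agent):
            hits[path] += 1
    return hits
```

A User-Agent header can be spoofed, so this is only a first filter. It is also worth confirming that your robots rules, firewall, and bot protection are not silently blocking these requests.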

Read the documentation

The Risks of Cloaking for AI Agents

QueryBurst, February 19, 2026

David McSweeney describes how Cloudflare’s Markdown for Agents makes web cloaking trivial by allowing sites to serve deceptive, poisoned content to agents while humans see a clean version. My take is that AI crawlers will eventually get as smart as Google and will penalize this practice once they recognize the disparity between these dual representations of reality. However, since many LLM crawlers still cannot execute JavaScript, this feature remains useful for websites that rely heavily on Client-Side Rendering.

Read the full test

LLM Extraction Pipelines and HTML Parsing

QueryBurst, February 13, 2026

In another great blog post, David McSweeney tested how LLM agents utilize extraction pipelines to parse data rather than reading raw HTML directly. He argues that cloaking pages for LLM crawlers is largely ineffective because these models already receive cleaned context through standard parsing layers.

Read the full test

Why it matters: 1. I have a better understanding of why serving markdown to AI crawlers doesn't necessarily improve performance. 2. This insight has led me to start pre-parsing HTML in my own tools to reduce token consumption and improve model performance.
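
To give an idea of what pre-parsing can look like, here is a minimal sketch using only Python's standard library. It is not the pipeline from the article nor the one in my tools: the class name, function name, and the list of skipped tags are illustrative assumptions. The idea is simply to drop markup and non-visible blocks before handing text to a model.

```python
from html.parser import HTMLParser

# Tags whose contents are dropped entirely. This list is an assumption
# for the sketch, not a description of any real LLM pipeline.
SKIPPED_TAGS = {"script", "style", "noscript", "template"}


class VisibleTextExtractor(HTMLParser):
    """Collect text nodes that sit outside the skipped tags."""

    def __init__(self):
        super().__init__(convert_charrefs=True)
        self._skip_depth = 0
        self._chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in SKIPPED_TAGS:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in SKIPPED_TAGS and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        # Keep only non-empty text found outside skipped tags.
        stripped = data.strip()
        if self._skip_depth == 0 and stripped:
            self._chunks.append(stripped)

    def text(self) -> str:
        return "\n".join(self._chunks)


def html_to_text(html: str) -> str:
    """Return the text content of an HTML document, one chunk per line."""
    parser = VisibleTextExtractor()
    parser.feed(html)
    parser.close()
    return parser.text()
```

Calling `html_to_text(page_html)` returns the headings and paragraphs without tags, scripts, or styles, which is usually a fraction of the original size.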

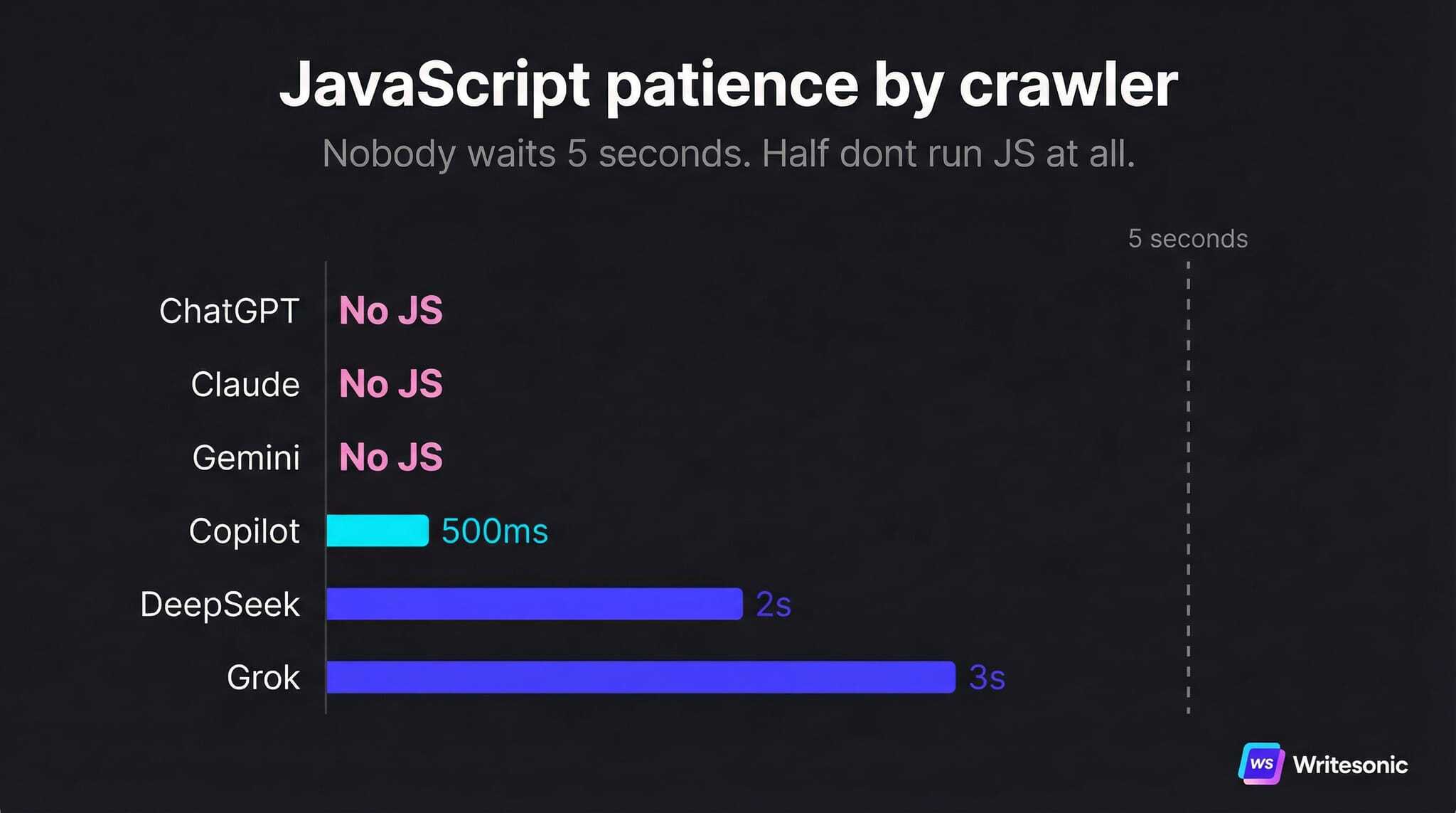

Writesonic study on how LLMs crawl and extract content

Writesonic, March 30, 2026, 10 min

Writesonic conducted a test where they built a page with 62 hidden codes to see what LLMs would report. In short, ChatGPT, Claude, and Gemini don't execute JavaScript, and JSON-LD, meta descriptions, and OG tags are stripped out in the extraction pipeline.

Read the full study

Why it matters: 1. Structured Data (SD) may not be directly ingested by LLMs, but I still believe it creates an indirect visibility loop. LLMs use query fan-out and RAG to ground answers in web searches; because search engines rely on SD to categorize and rank content, optimized markup increases the odds of the site being a selected citation. I will be conducting my own robust test in the coming weeks to validate this correlation. 2. If your website relies on CSR and you don't want to serve a blank page to major LLMs, pre-rendered HTML is non-negotiable.
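
One rough way to check the CSR point is to test whether key phrases exist in the raw server HTML, before any JavaScript runs. The Python sketch below does that with simple regular expressions. The function names are hypothetical and the tag stripping is deliberately crude, so read it as a smoke test rather than a faithful model of how any LLM extracts content.

```python
import re

# Remove <script> and <style> blocks, then any remaining tags.
_SCRIPT_OR_STYLE = re.compile(r"<(script|style)\b.*?</\1\s*>", re.IGNORECASE | re.DOTALL)
_TAG = re.compile(r"<[^>]+>")
_WHITESPACE = re.compile(r"\s+")


def static_text(raw_html: str) -> str:
    """Return the text a non-JavaScript client could read from raw HTML."""
    without_blocks = _SCRIPT_OR_STYLE.sub(" ", raw_html)
    without_tags = _TAG.sub(" ", without_blocks)
    return _WHITESPACE.sub(" ", without_tags).strip()


def missing_without_js(raw_html: str, expected_phrases):
    """List the phrases that are absent from the static text of the page."""
    text = static_text(raw_html).lower()
    return [phrase for phrase in expected_phrases if phrase.lower() not in text]


# Hypothetical pages: one rendered client-side, one pre-rendered.
csr_page = (
    '<html><body><div id="root"></div>'
    '<script>document.getElementById("root").innerHTML = "Pricing plans";</script>'
    "</body></html>"
)
prerendered_page = "<html><body><h1>Pricing plans</h1></body></html>"

csr_missing = missing_without_js(csr_page, ["Pricing plans"])
prerendered_missing = missing_without_js(prerendered_page, ["Pricing plans"])
```

Here `csr_missing` contains the phrase because it only exists inside the script, while `prerendered_missing` is empty. Running the same check on the raw HTML of your key templates gives a quick signal of which ones need pre-rendering.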

Adobe News

Marketing to Agentic Audiences

Rachel Thornton, Adobe’s enterprise CMO, describes how AI agents, as a new audience, require structured brand data for agentic intent. As an SEO/GEO specialist, I fully agree. It mirrors the work we do at LLM Optimizer: helping companies transition toward this new reality.

Tools News

EmDash: the new CMS from Cloudflare

Cloudflare has launched EmDash, a new CMS built on the Astro framework and positioned as the spiritual successor to WordPress. Its standout feature is a seamless migration path that allows you to move an entire site instantly via WXR files or a dedicated exporter. Having built my own blog using Astro, I can vouch for the incredible performance and personalization it offers; seeing that same foundation at the core of EmDash makes it a serious contender in the Cloudflare ecosystem.

SEO audit with Claude Code Skills

This article describes 9 open source SEO skills developed by Agrici Daniel to manage technical audits and AI search optimization. While these capabilities are often difficult to scale on massive sites or across a portfolio of sites, they offer a powerful way to audit smaller projects.

Read the full post

Reverse Prompter Free Tool

Dan Petrovic has released a new free GEO tool called Reverse Prompting, designed to identify potential prompts for a specific page or from an LLM output. This is a great resource when you want to track LLM visibility but are unsure where to start. At LLM Optimizer we also provide prompts to our users based on several methods and it is always interesting to see a different approach to achieving this goal.

Test the reverse prompter

Free 2MB Crawl Limit Checker

Will It Crawl

Will It Crawl is a free tool to quickly check if a page exceeds the 2MB limit set by Googlebot. You can find more details about this limit in the SEO section. For those looking to audit pages en masse, Screaming Frog also provides this data on the validation tab: filter for “HTML Document Over 2MB” to identify indexing risks. It is free for up to 500 URLs.

Test the 2MB crawl limit checker

GitHub Repository with 30+ Marketing Skills

Corey Haines

Corey Haines has released an open source library on GitHub featuring over 30 specialized marketing skills designed for AI agents like Claude Code. I am particularly interested in testing the SEO tools for schema and audits, the paid media skills for ad creative, and the CRO suite for optimizing signup flows and popups.

Go to GitHub repository

Ahrefs teases Agent A

Ryan Law, Director of Content Marketing at Ahrefs, has teased the upcoming release of Agent A, a new suite of tools designed for content optimization. I am looking forward to seeing how Agent A performs and comparing its capabilities with our own work at AEM Sites Optimizer!

Read one of his posts

Please note: All opinions and blog posts shared on this website are strictly my own personal projects. They do not represent the views, strategies, or official products of my employer, Adobe.

Hi, I'm Quentin, an SEO/GEO Specialist at Adobe building AI-powered tools for Site Optimizer and LLM Optimizer. I use this site to document my thoughts on where search is heading in the age of AI, and to share the strategies and shortcuts I rely on.

Connect on LinkedIn