SEO, GEO & AI News: April 6th – April 12th, 2026

AI Summary

This post summarizes key industry shifts from April 6th to April 12th, 2026, focused on the transition to an AI landscape. Quentin Yacoub breaks down Google’s new shopping classifiers, domain visibility on GPT-5.4, and “Search Everywhere” strategies—with practical takeaways for navigating agentic commerce and LLM-driven discovery.


This article is also available in French: Actualités SEO, GEO & IA : 6 avril – 12 avril 2026

The news has been very dense again this week. Between SEO analyses, the GEO hype, and the never-ending stream of new tools arriving thanks to AI coding assistants, there is a lot to keep track of!

This digest should help you save time and jump straight to the section that interests you most. If I had to pick just one, it would be the 12 LLM Ranking Factors in 2026, which is very comprehensive and provides an overview of the strategies available to improve your visibility on LLMs.

Have feedback on this digest? Connect with me on LinkedIn.

SEO News

5 Characteristics of Websites Winning in Google’s 2026 Landscape

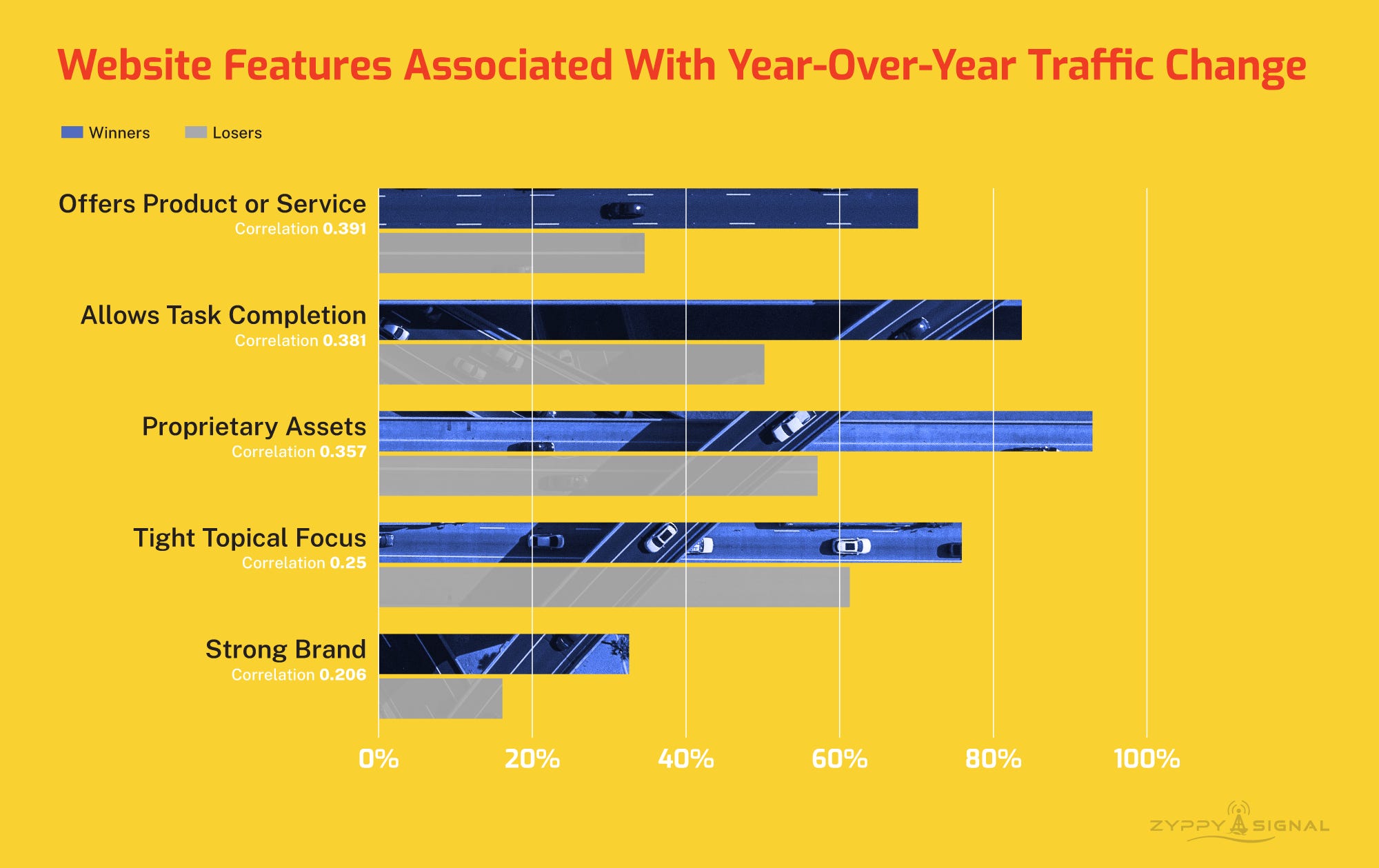

Cyrus Shepard identifies five core traits of search winners: offering a proprietary product or service, enabling direct task completion, owning unique assets, maintaining a tight topical focus, and building a strong brand. This data-backed analysis demonstrates that Google is increasingly rewarding sites that provide unique utility and authority beyond simple informational content.

Does AI Content Really Rank? New Data From 42,000 Blog Posts

Semrush examines the ranking performance of AI-generated versus human-written content by evaluating 42,000 blog posts using GPTZero. The research reveals that human-authored pages are 8 times more likely to secure the top spot. The findings suggest that while AI significantly boosts production speed, search engines consistently reward human originality at the highest levels of performance.

Read the Semrush study

Reverse-Engineering Chrome’s New Shopping Classifier

Dan Petrovic, April 3, 2026

Dan Petrovic and Olivier de Segonzac have reverse-engineered a new Google Chrome model that identifies when a user is visiting a shopping page. This classification allows Google to personalize user experiences and recommendations based on browsing history metadata. The system relies on a restrictive pipeline that only analyzes the first few hundred words of a page’s content.

Explore the shopping model

Why it matters: Technical elements like large menus or cookie banners can prevent the model from identifying actual product data, and if Chrome fails to recognize a page, users will miss out on automated commerce tools. To remain detectable, I recommend ensuring the primary shopping signals are placed early in the content.
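To make that recommendation concrete, here is a minimal Python sketch that checks whether shopping signals show up within the first few hundred words of a page's visible text. The 300-word window, the signal patterns, and the function name are all assumptions chosen for illustration; they are not the features or cutoff used by Chrome's model.

```python
import re

# Hypothetical signal patterns, chosen for illustration only.
SHOPPING_SIGNALS = [
    r"add to cart",
    r"buy now",
    r"in stock",
    r"[$€£]\s?\d+(?:[.,]\d{2})?",  # a price such as $49.99
]


def first_signal_positions(text: str, window: int = 300) -> dict:
    """Map each signal to the word index of its first match.

    A value of None means the signal does not occur within the
    first `window` words of the text.
    """
    words = text.split()
    head = " ".join(words[:window])
    positions = {}
    for pattern in SHOPPING_SIGNALS:
        match = re.search(pattern, head, flags=re.IGNORECASE)
        if match is None:
            positions[pattern] = None
        else:
            # Count the words that precede the match to get a word index.
            positions[pattern] = len(head[:match.start()].split())
    return positions
```

Running this on the extracted text of a product template gives a quick signal of whether a large menu or cookie banner is pushing the price and call to action out of the assumed window.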

Google Resolves Year-Long Search Console Impression Inflation Bug

Google has initiated a fix for a logging error in Search Console that over-reported impression counts from May 13, 2025, through April 3, 2026. As this correction rolls out over the coming weeks, we should see a decrease in impressions, though click data and actual search performance remain unaffected.

Read the full post

Why it matters: If your reported impressions drop in the next few weeks, there is no need to panic: this is a shift toward data accuracy rather than a performance decline. This reporting correction makes me believe that recent declines in CTR were likely caused in part by technical inflation, and not just by the presence of AI Overviews.
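The effect on CTR follows from simple arithmetic: CTR is clicks divided by impressions, so inflated impressions push CTR down even when clicks are unchanged. A minimal Python sketch, where the click count, impression count, and 20% inflation rate are purely illustrative numbers and not figures published by Google:

```python
def ctr(clicks: float, impressions: float) -> float:
    """Click-through rate as a fraction; 0.0 when there are no impressions."""
    return clicks / impressions if impressions else 0.0


def corrected_ctr(clicks: float, reported_impressions: float,
                  inflation_rate: float) -> float:
    """CTR after removing an assumed share of spurious impressions.

    `inflation_rate` is the hypothetical fraction of reported
    impressions that were over-counted (0.2 means 20%).
    """
    real_impressions = reported_impressions * (1 - inflation_rate)
    return ctr(clicks, real_impressions)


# Illustrative values only.
reported = ctr(500, 25_000)                   # CTR as reported during the bug
corrected = corrected_ctr(500, 25_000, 0.2)   # CTR once inflation is removed
```

With these made-up numbers, the same 500 clicks yield a higher CTR after the correction, because the denominator shrinks while the numerator stays the same.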

GEO News

GPT-5.3/5.4 Visibility Shift

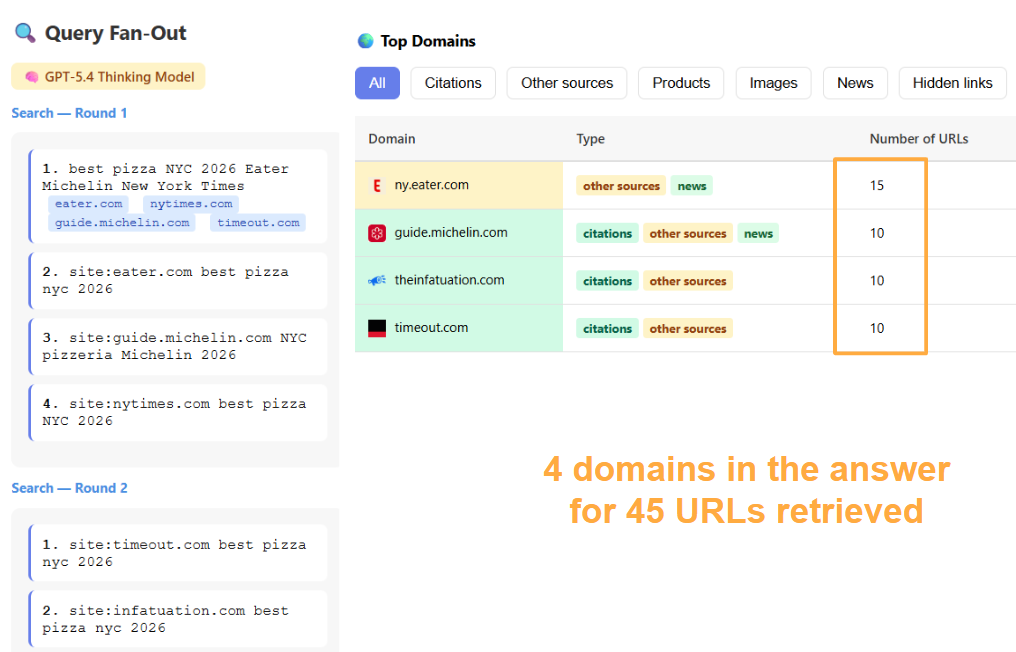

A study from Resoneo reveals a 20% decline in unique domains cited per ChatGPT Search response following the transition to GPT-5.3 and 5.4. While crawl depth remains stable, the models now favor high-authority domains over third-party intermediaries, mirroring the historical Bigfoot effect. This shift significantly reduces the overall visibility surface for the majority of websites.

Why it matters: The data validates observations by Chris Long (see the previous edition for more information) that ChatGPT is now employing site: operators to bypass intermediary listicles. This change requires a pivot toward building direct domain authority rather than relying on middleman-driven visibility.

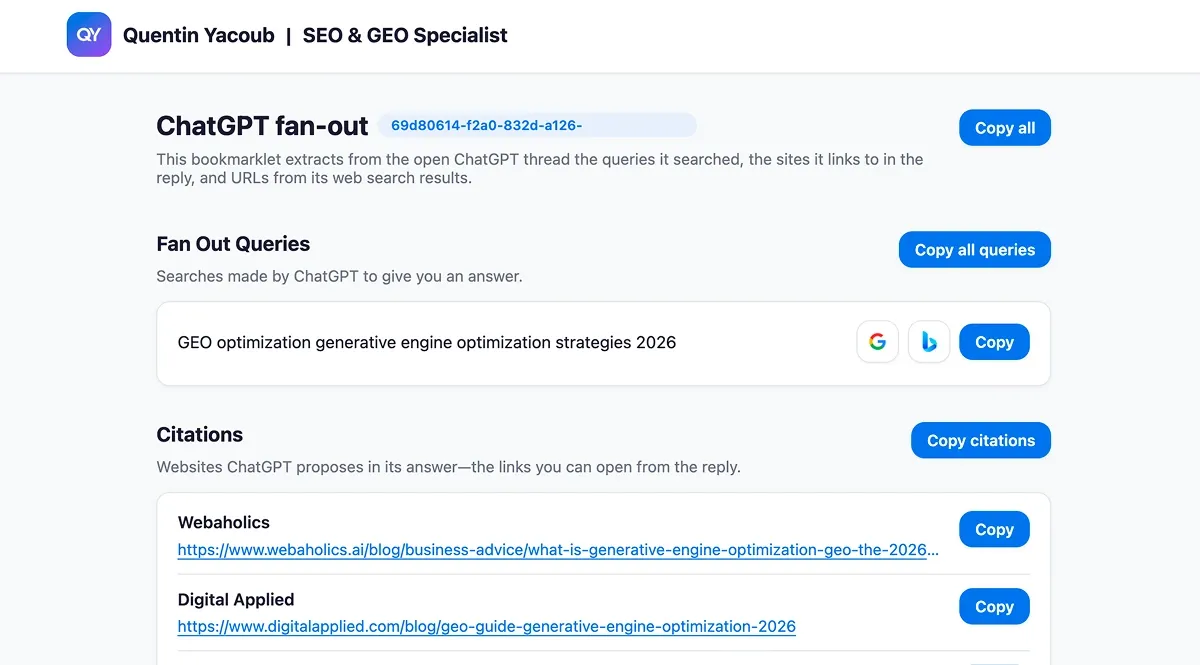

Unlocking ChatGPT’s Hidden Searches

Chris Long, April 9, 2026

Chris Long shared on LinkedIn the return of fan-out queries in ChatGPT, allowing SEOs to track the hidden search terms the model uses behind the scenes. I created a bookmarklet to simplify the extraction of this data directly from any conversation. And if you want to do this at scale and on any LLM, LLM Optimizer is built for that.

Read the LinkedIn post

Why it matters: These queries allow us to track organic keywords for AI visibility, identify influential sources, and pinpoint content gaps.

ChatGPT Traffic Analysis

Semrush analyzed 17 months of data, finding that ChatGPT outbound referral traffic surged 206% in 2025 as total traffic plateaued near 1 billion monthly visits. While only 34.5% of queries currently trigger live web search, the platform has become a critical entry point for brands.

Read the full study

Why it matters: These statistics confirm the shift toward LLM search. In my view, GEO is no longer an option for brands; it is now a critical requirement for maintaining digital visibility in this new landscape.

12 LLM Ranking Factors for 2026

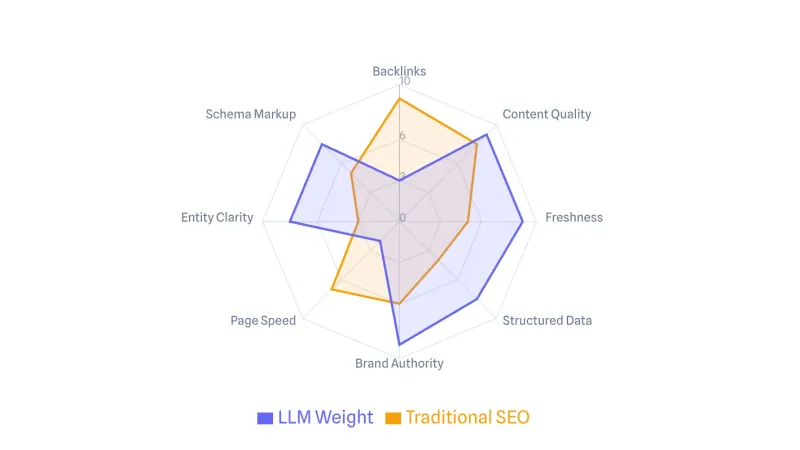

AISO, April 7, 2026

This comprehensive guide breaks down 12 factors that drive visibility in LLMs: Query Match, Freshness, Depth, Direct Answers, Structured Data, Entity Clarity, Heading Hierarchy, Stats/Citations, Tables/Lists, Topical Authority, Brand Search Volume, and Multi-Platform Presence.

Explore the 12 LLM Ranking Factors

Why it matters: This blog post provides a roadmap for transitioning from traditional SEO to GEO.

How to Onboard to the Universal Commerce Protocol

Google Support, April 8, 2026

Google has released a formal onboarding guide for the Universal Commerce Protocol (UCP) to facilitate native AI-driven checkouts on Gemini and Google Search. The integration process requires merchants to complete a technical implementation and submit an interest form before gaining access to a sandbox environment for final validation.

Read the UCP Onboarding Guide

Why it matters: While ChatGPT recently stepped back from its instant checkout feature (see previous edition), Google’s push for UCP confirms that the industry is moving decisively toward agentic commerce.

AI Citations: Why Robots.txt Fails News Publishers

BuzzStream, March 28, 2026

Analysis of 4 million news publishers' citations reveals that standard robots.txt directives are largely failing to prevent AI models from citing content. Despite these restrictions, 70.6% of sites blocking retrieval bots and 92.3% blocking training bots are still cited. This suggests that AI models are either bypassing crawler rules or using search engine results (SERP extraction), third-party archives like Common Crawl, and existing datasets.

Read the full study on AI crawlers

Why it matters:
1. Any site serious about restricting AI access should implement blocking at the host or CDN layer to ensure the directive is enforced.
2. It's a reminder that we should now audit a publisher's crawler rules before investing in Digital PR, as backlinks from sites that successfully block AI will fail to contribute to your visibility in LLMs and GEO results.
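As a starting point for that audit, the sketch below uses Python's standard `urllib.robotparser` to list which AI crawlers a given robots.txt disallows. The user-agent list is illustrative and should be checked against each vendor's current documentation, and `blocked_ai_bots` is a hypothetical helper name. Keep in mind the study's finding: a robots.txt rule states intent, it does not prove the content is actually unreachable.

```python
from urllib.robotparser import RobotFileParser

# Illustrative list of AI-related crawler tokens; verify before relying on it.
AI_USER_AGENTS = ["GPTBot", "OAI-SearchBot", "ClaudeBot", "PerplexityBot", "CCBot"]


def blocked_ai_bots(robots_txt: str, url: str = "https://example.com/") -> list[str]:
    """Return the AI user agents that the given robots.txt disallows for `url`."""
    parser = RobotFileParser()
    # parse() takes the file content as a list of lines.
    parser.parse(robots_txt.splitlines())
    return [ua for ua in AI_USER_AGENTS if not parser.can_fetch(ua, url)]


# Hypothetical robots.txt that blocks one training bot and allows everything else.
sample = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""
print(blocked_ai_bots(sample))
```

In practice you would fetch the publisher's live robots.txt first and pass its text to the helper, then compare the result with whether the publisher is still being cited.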

ChatGPT (GPT 5.4) Prioritizes .com Domains in Query Fan-Out

Chris Long, April 7, 2026

Chris Long identifies a new behavior in GPT 5.4 where the model utilizes site:.com operators during query fan-out to prioritize content from specific top-level domains. This discovery suggests that LLMs may increasingly treat the .com TLD as a primary trust signal when sourcing authoritative information for citations and recommendations.

Read the full LinkedIn post

Why it matters:
1. This research focused on a US location. I suspect ChatGPT might adapt this behavior to other regions by prioritizing local TLDs like .ca or .fr.
2. This could mean that modern extensions like .ai or .co may see lower visibility. It warrants a larger-scale study to see how deep this bias goes.
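A larger-scale study could start by counting how often `site:` operators appear in extracted fan-out queries and what they target. The Python sketch below assumes you already have the fan-out queries as plain strings; the sample queries and the function name are invented for illustration and are not real ChatGPT output.

```python
import re
from collections import Counter

# Captures whatever follows a site: operator, e.g. ".com" or "example.com".
SITE_OPERATOR = re.compile(r"\bsite:(\S+)", re.IGNORECASE)


def site_operator_targets(queries: list[str]) -> Counter:
    """Count the targets of site: operators across a list of fan-out queries."""
    counts = Counter()
    for query in queries:
        for target in SITE_OPERATOR.findall(query):
            counts[target.lower()] += 1
    return counts


# Hypothetical fan-out queries.
queries = [
    "best running shoes 2026 site:.com",
    "running shoe reviews site:runnersworld.com",
    "best running shoes",
]
print(site_operator_targets(queries))
```

Targets that start with a dot are TLD-level restrictions, so comparing their counts across locations would show whether the model swaps .com for local TLDs.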

Adobe News

The Shift from Ranked Links to AI Synthesis

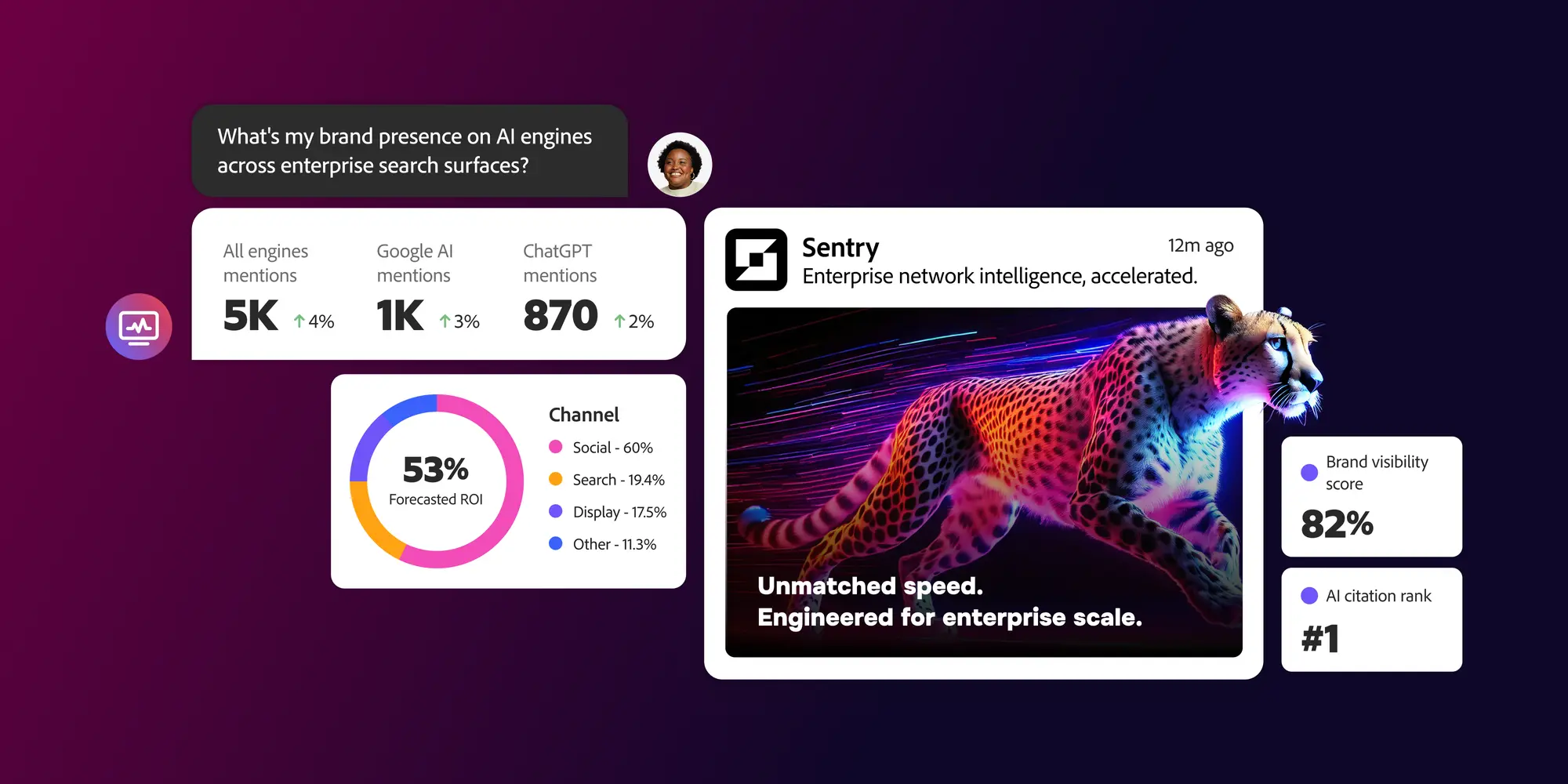

This high-level blog post highlights that traditional search is evolving from a list of ranked links to a synthesis-first environment where AI-generated overviews dominate the discovery process. To remain visible, enterprises must pivot toward content extractability and verifiability to ensure their brand is accurately represented and cited within LLM responses. LLM Optimizer is the tool Adobe built to help large enterprises make this shift smoothly.

Adobe's 'Search Everywhere' Playbook

Adobe for Business, April 9, 2026

As AI-driven referrals grow more than tenfold, this guide explains why traditional SEO must evolve into a surface-agnostic 'Search Everywhere' strategy. It provides a practical framework for maintaining brand visibility across search engines, social platforms, and generative AI assistants where discovery now happens without traditional clicks.

Read the full guide

Tools News

ChatGPT 5.3/5.4 Fan-out Extraction Bookmarklet

I've created a free tool designed to extract query fan-out and citations directly from ChatGPT conversations. By visualizing how the AI deconstructs prompts into specific search queries, SEO and GEO specialists can better understand the sources and reasoning behind generated answers.

Google Chrome DevTools MCP

Google Chrome DevTools MCP allows AI coding assistants to control and inspect a live Chrome browser. By integrating this tool, developers can automate complex browser tasks, perform in-depth debugging, and generate detailed performance analysis directly through the AI agent.

Explore Chrome DevTools MCP on GitHub

Building Semantic Vector Embedding Libraries for Advanced SEO

SVEL is a free tool developed by David Capone that transforms collections of web pages into searchable semantic libraries using vector embeddings. By leveraging the Google Gemini API, it identifies thematic similarities between pages to assist with internal linking, content gap analysis, and recommendation systems.
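To illustrate the general idea of embedding-based similarity (not SVEL's actual implementation), here is a small Python sketch that ranks pages by cosine similarity. The three-dimensional vectors and URLs are toy values; real embeddings returned by an embedding API have many more dimensions, and the helper names are hypothetical.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors; 0.0 if either is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def most_similar(page: str, embeddings: dict[str, list[float]], top_k: int = 3):
    """Return up to `top_k` (url, score) pairs most similar to `page`."""
    target = embeddings[page]
    scored = [
        (other, cosine_similarity(target, vector))
        for other, vector in embeddings.items()
        if other != page
    ]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)[:top_k]


# Toy embeddings for three hypothetical pages.
embeddings = {
    "/running-shoes": [1.0, 0.0, 0.0],
    "/trail-shoes": [0.9, 0.1, 0.0],
    "/return-policy": [0.0, 1.0, 0.0],
}
print(most_similar("/running-shoes", embeddings, top_k=1))
```

Pages that score high against each other but are not yet linked are natural internal linking candidates, while topics with no close neighbors can point to content gaps.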

Test the tool

All-in-One Browser Designed for SEO/GEO Specialists

SERP Lens is a specialized web browser developed by consultants Sam Underwood and Brodie Clark to streamline SEO workflows. It integrates location and device emulation, technical site analysis, and AI search insights into a single interface to replace fragmented browser extensions.

Test SERP Lens

Git Repo - OpenDataLoader PDF

OpenDataLoader PDF is an open-source parser that converts documents into AI-ready Markdown and JSON. It decodes complex tables and nested structures with high precision.

Go to Git repo

Why it matters: This tool is an opportunity to convert client documentation into actual web pages or to build a RAG system to fact-check content against source documents.

Visualizing Search Console Data for Topic Strategy

QueryDrift automatically clusters Search Console queries into distinct topics and visualizes them through an interactive map for deep-dive subtopic analysis. The tool calculates overall site focus and provides data-driven insights to help users build a comprehensive and aligned topic strategy.

Explore QueryDrift Features

Please note: All opinions and blog posts shared on this website are strictly my own personal projects. They do not represent the views, strategies, or official products of my employer, Adobe.

Hi, I'm Quentin, an SEO/GEO Specialist at Adobe building AI-powered tools for Site Optimizer and LLM Optimizer. I use this site to document my thoughts on where search is heading in the age of AI, and to share the strategies and shortcuts I rely on.

Connect on LinkedIn